Achtung Baby!

Atten-Hut!

I went looking for movie clips of soldiers called to attention. This one’s funny. You can’t argue with (the great) Christopher Walken:

Do I make myself clear?

Sergeant Merwin J Toomey https://youtu.be/WvFpUJfPASc

The particular formalities of military communication are interesting. But it’s the impetus for them I’m interested in. What basic universal realities underlie and subsequently generate formalized communication structures? Structures designed to make messages clear enough to maximize the chances that they are received and understood correctly and, as a bonus, that let the sender get acknowledgement that the message was understood correctly?

X-Ray Delta One, this is Mission Control. Roger your two-zero-one-three. Sorry you fellows are having a bit of trouble. We are reviewing our telemetric information in our mission simulator and will advise. Roger your plan to go E.V.A. and replace alpha-echo-three-five unit prior to failure.

2001: A Space Odyssey https://youtu.be/ZRvlaabVzag

The call to attention is the start of any formalized communication structure. Let’s come back to that and other aspects of such structure after first jumping into the chaos that requires it. A few meandering stories follow. Hopefully they ring true with you.

In this post is a collection of examples of focused attention and failures of attention, of difficulties in achieving attention, and then, significant risks of communication failure even when attention is achieved, the built-in limitations of human attention, and the necessity of attention nonetheless. If you read this post to the end, I think you'll appreciate attention in ways that may not have occurred to you before. The entire discussion serves as an introduction to a software development proposal for attention-focusing "TGN rigs" within digital modeled environments in industrial domains like mechanical engineering and the AECO (Architecture, Engineering, Construction and Operations) industry.

Military Communication

I’ll dwell on the military aspect of this just a bit more. First, more movies.

A typical scene:

...a room full of soldiers. It's noisy, everyone in conversation. Rowdy or not, it's disordered. From the point of view of command and control it's chaotic. People standing around in myriad conversations. An officer of a certain rank enters the room. The first to see him immediately calls the room to attention. Conversation ends. All turn to face the officer.

How that happens is interesting in itself, a cascade effect in a crowd of individuals observing in peripheral vision the behavior of others who one by one are focusing their attention, a cascade effect that seems automatic and instant when you’re in it participating. But slow motion film would show the cascade in ways otherwise unseen, I suspect. Probably the eyes are drawn first to the voice calling attention, then shifting toward the target that person (the caller) is calling attention to.

With attention established, the officer announces some message/order/instruction, or otherwise dismisses the call to attention with something like "as you were" or "carry on". Depending on the gravity of the message, if any, the officer will deliver it with the room still at attention or preface with "at ease" giving those at attention permission to stand with a more relaxed posture.

It’s funny to think of a military without these conventions.

An officer walks into a bar... (this is a joke)

But who pays attention? I mean, OK, maybe things should rely entirely on charisma. There’s that:

Military communication has a certain format. A set of conventions, methods. But these forms are not primarily about protocol formalities. The forms, yes, are ceremonialized, certainly, and they formalize hierarchy. But it’s the driving force beneath this that’s of greater interest. Hierarchy and communication structures exist for a reason. The purpose is to maximize the effectiveness of commands issued from an authority. The commands have to be communicated, listened to, and understood, efficiently.

This is easier said than done and involves an array of methods.

Conventions of military communication are designed in response to unacceptable communication breakdown. Against the inevitable entropy and chaos that guarantee miscommunication, mitigating structures that anticipate and resolve the most common causes of communication failure are designed and made convention. I learned some of these conventions in the Navy where I operated radar and navigation equipment and talked over radios. I remember this sometimes.

You call their name. That’s a really good way to get someone’s attention. If you want my attention, you say:

“hey, Rob”.

Then I’ll look at you and answer

Yeah! (volume depends on if you’re near or far)

And then you’ll say:

blah blah blah

And then I’ll say,

yeah, blah, OK

This is the basic structure that everyone pretty much understands by the time they get to kindergarten. But it fails all the time nonetheless for countless reasons. Pretty much the entire corpus of human literature is an exploration of the tragicomedy of why this simple structure breaks down. All the time.

In the military, formal structures enforce this simple setup. And it’s taught explicitly, in basic and technical training.

First of all, if you’re on a radio, you say the callsign of the one you’re calling, first. Then you identify yourself. You say your callsign, second. It has to be in that order: the one you’re calling first, to get their attention, and yourself, the one calling, second.

X-Ray Delta 1, this is Whiskey 7 November.

In movies this is often done wrong:

This is base station calling blah blah blah…

Say the name of the one you’re calling first, to get their attention. They’re busy doing other things. If you say your name first, that’s just background noise. Your target won’t direct their attention your way. They’re zoned out. To break through that, call their name first.

Same with my dog, I call his name. When he’s running around in a field with his nose buried in piles of aroma in every direction, I don’t say:

This is Rob calling Blake

I say:

Blake!

He knows my voice, so I don’t have to say “Blake, this is Rob.”

Blake shows I’ve got his attention. He looks at me.

I tell him to come.

He runs over.

The same with military communication. You ask for the attention of the one you intend to communicate with. They acknowledge you have their attention. Then you communicate.
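The sequence described above (call for attention, acknowledgement, message, acknowledgement) can be sketched in a few lines of Python. This is only an illustration, not an actual military procedure: the `Station` class, the `hail` and `exchange` functions, and the "Go ahead" and "roger" replies are all names and wordings assumed here for the sake of the example.

```python
from dataclasses import dataclass


@dataclass
class Station:
    """A hypothetical radio station that attends only to calls that open with its own callsign."""
    callsign: str

    def hears(self, transmission: str) -> bool:
        # Attention is triggered by the FIRST words heard, so the callee's
        # callsign has to lead the transmission.
        return transmission.lower().startswith(self.callsign.lower())


def hail(callee: str, caller: str) -> str:
    """Build the opening call: callee's callsign first, caller's callsign second."""
    return f"{callee}, this is {caller}."


def exchange(caller: Station, callee: Station, message: str) -> list:
    """Run the four-step exchange; stop early if attention is never obtained."""
    transcript = [hail(callee.callsign, caller.callsign)]
    if not callee.hears(transcript[0]):
        return transcript  # no attention, so the message is never sent
    # Step 2: the callee acknowledges that the caller has its attention.
    transcript.append(f"{caller.callsign}, this is {callee.callsign}. Go ahead.")
    # Step 3: only now is the message itself transmitted.
    transcript.append(f"{callee.callsign}, {message}")
    # Step 4: the callee acknowledges receipt of the message.
    transcript.append(f"{caller.callsign}, roger.")
    return transcript


if __name__ == "__main__":
    base = Station("Whiskey 7 November")
    ship = Station("X-Ray Delta 1")
    for line in exchange(base, ship, "report your position."):
        print(line)
    # The movie version puts the caller first and fails to get attention:
    print(ship.hears("This is Whiskey 7 November calling X-Ray Delta 1"))
```

The point of the sketch is the early return: if the opening call does not lead with the callee's callsign, nothing that follows is ever heard.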

This was the ship I was on (same type). I flew in the helicopter once.

Attention!

But attention to what?

Have you ever been driving and your passenger yells Watch out! but doesn’t point at anything?

Watch out for what?

The mind is constantly occupied with attempting to figure out what to pay attention to, and therefore what to ignore. And A LOT is ignored. Almost everything actually. When suddenly one person sees something unexpected, the other person may be absolutely unable to see it. The magnitude of this problem is under-appreciated generally. Recent work (2010) by Daniel Simons illustrates the problem starkly: https://en.wikipedia.org/wiki/The_Invisible_Gorilla. His experiments took many by surprise and undermined common assumptions in perceptual psychology.

When our attention is focused on a task, we’re blinded (substantially, and to an unexpected degree) to everything not relevant to that task.

Here’s Daniel Simons discussing his experiments that revealed surprising limitations in our (human) attention-focusing mechanism. Specifically, limitations in our ability to divert attention to major situational changes as they occur when attention is focused already on some task. We can’t even notice the changes. So we recognize no inducement, no need, to redirect attention and change focus. The talk is well worth the time (19 minutes):

“We intuitively believe that the mechanisms of attention will automatically bring to focus things that matter to us, and it turns out that intuition is dangerously wrong.”

Daniel Simons https://youtu.be/eb4TM19DYDY?t=240 (4:17)

Simons, from his talk:

“But first, what I want to do is test your own intuitions. I want to show you a task that you might intuitively think is pretty difficult, but, as will turn out, you actually can be pretty good at doing it. Some of you may have seen this before. If you have, that’ll make it that much easier for you, but you should still try your best to do it. The task is simple: all you have to do is count how many times three people wearing white shirts pass a basketball. They’d be running around, passing the ball, dribbling it. Sometimes they’re going to fake passes to make it harder to keep track, but all you have to do is count how many total times they passed the ball. Now, we are going to make it that much harder by also having 3 people wearing black shirts passing their own ball, and you’re not supposed to count those; you’re only supposed to count the passes by the players wearing white. OK, let’s give it a shot.”

Daniel Simons https://youtu.be/eb4TM19DYDY?t=114 (1:54)

(Video)

“OK, how many people got 15 passes? Some of you’ll probably get 14, some of you’ll probably get 16. For those of you who haven’t seen this before, did anybody spot a gorilla? (Laughter) Did anybody not spot a gorilla? (Laughter) Good. OK. Here’s where he is. Let me just rewind, and you can see what happens here. There’s the gorilla. (Laughter) The gorilla is walking through the scene, it stops there, thumps his chest for a second (Laughter) ducks under a pass. The gorilla is there for 9 seconds, but when you do this under more controlled conditions in a lab where you bring in one person at a time, you find that fully 50% of people don’t notice the gorilla. In fact, if you ask them about it, they’d say, “What?” We had people accuse us of showing them different videos before and after. (Laughter) Why is that?

This is a compelling example for a number of reasons, but mostly it’s compelling because it’s counter-intuitive. It’s hard to imagine that a person in a gorilla suit could be in the middle of the video for 9 seconds, fully visible, right there in front of you, and you could still not see it. We intuitively believe that the mechanisms of attention will automatically bring to focus things that matter to us, and it turns out that intuition is dangerously wrong.”

https://youtu.be/eb4TM19DYDY?t=188

“Just to illustrate how strongly people hold this conviction, if you ask people just before showing them this video, you ask them, “Would you notice if I’d showed you a video of people passing basketballs, and you had to do counting, and you had this person in the gorilla suit go through the middle, would people notice it?” This was a study done by Bonnie Angelone and Dan Levin, and what they found was that fully 90% said yes, and they were sure of it.”

https://youtu.be/eb4TM19DYDY?t=268

People are confident that when something unexpected or distinctive happens right in front of you, you’ll automatically notice it, and it’ll draw attention to itself. Our intuitions about what we notice are consistently wrong under these conditions.

https://youtu.be/eb4TM19DYDY?t=289

Assuming situational awareness in ambiguous environments

There has been one fatal air crash in the history of aviation in my hometown, Lexington, Kentucky. The cause of the crash followed directly from inattentional blindness of at least three people.

This is hard to watch but makes the point in the strongest possible way:

If you search for information about Comair flight 5191 you’ll find no shortage of comments about who to blame. I have my own opinion but let’s focus on the gorilla in the room:

a gorilla in the room will be unnoticeable to the majority of people focused on tasks other than detecting a gorilla in the room.

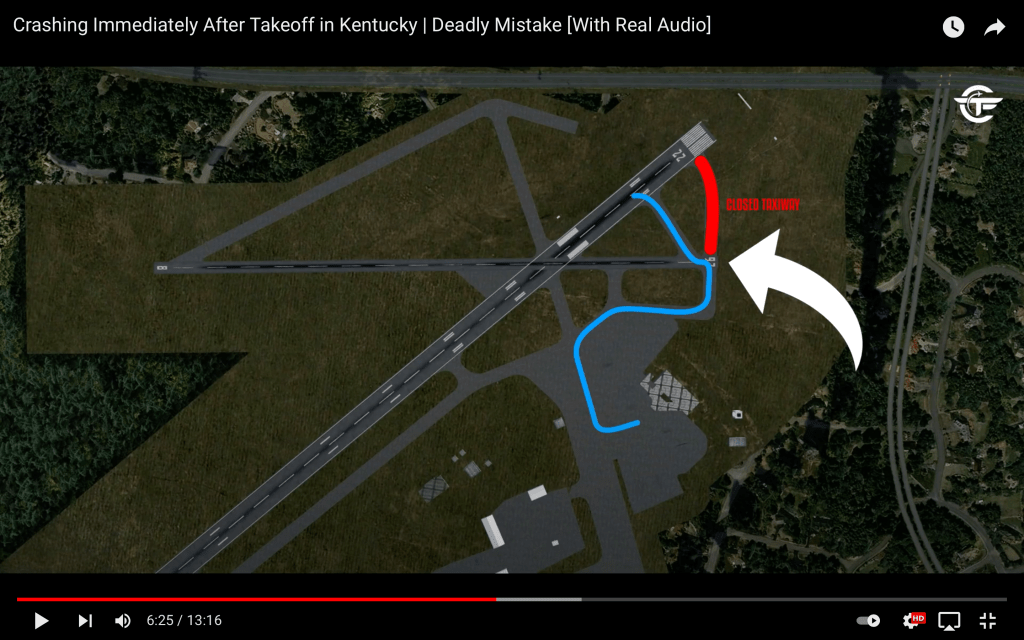

See the graphic at 6:25 in the video which shows a map of the aircraft taxi path from the departure gate to runway 22 crossing first over runway 26:

The flight was supposed to take off from runway 22, which as usual at Lexington required taxiing over runway 26 to get to 22. The normal taxi path from 26 to 22, marked in red on the map above, was closed for reconstruction. Attention was not adequately drawn to this gorilla of potential confusion about the crossover from 26 to 22.

Simply stunning, in fact, is the reason construction was underway on that taxiway at the time: the reason the normal taxiway from 26 to 22 was closed, which forced the unusual and visually discontinuous path from 26 to 22.

During the construction, with the red path closed, one can imagine the dominating visual cues (aside from signage) for the pilot and co-pilot.

When the aircraft arrives at the point indicated by the white arrow (image above), what the pilot sees depends on whether the red taxiway is open or closed.

A. Normally, the red path is open and pilots in aircraft arriving to the point (white arrow) are faced with a decision point:

- continue forward to the next runway (22), or

- turn left for takeoff from this runway (26)

The perceptually dominant visual cues (those comprising the totality of the environment), visual cues OTHER THAN signage, indicate that the aircraft has arrived at a decision point, a fork in the road.

B. On the other hand, if the red taxiway is closed, then pilots arriving at this point see no options, no choice to be made, no decision point. The perceptually dominating environmental cues (that is, the totality of the environment), visual cues OTHER THAN signage, indicate that the aircraft has arrived at a terminus.

Arrival at terminus is the perceptually dominant condition at B, a radically different perceptual condition than A. Condition A induces, unavoidably, a decision state (proceed forward, or stop here). Condition B presents no decision point.

The radical difference between A and B is that B provides no inducement for the pilots to engage/use their own human perceptual attention-focusing mechanisms. We engage these mechanisms, all of us, the same way pilots do. We’re all human. We’re all mammals, sentient beings and so on. We engage our attention focusing capacities in order to solve a problem, and support a decision.

Problem: I need to make a decision (continue straight on, or stop here and turn left for takeoff), and I need information to support my decision. So I engage my attention focusing capacity and seek the information, from the environment, that tells me what I need to know.

When you think of it that way, the brutality of this situation (B.) becomes very clear indeed.

The pilots find themselves at a terminus, and therefore with no decision to be made, and therefore with no impetus for engaging their attention-focusing capacity in support of a decision, because the dominating perceptual condition indicates no requirement for a decision to begin with. There’s nothing to figure out, so nothing to pay attention to.

In fact it’s even worse, more brutal. The pilots in this situation are expected to imagine, without any prompt for the need to imagine it:

- that they are not at a terminus, although it looks like one

- that they are in fact at a decision point requiring a decision although the dominating cues suggest otherwise

- that the correct decision involves imagining an otherwise invisible sequence: first a left turn, then progression for an unknown distance, and then a right turn onto the correct path to the correct runway.

Does that sound simple?

It’s this complicated: pilots arrive at a decision point that does not appear to be a decision point, and so with no awareness of any need to make a decision, they imagine the need to do so, and then proceed to auto-generate a large number of possible decisions anyway, all in the perceptual dark. You see where this goes: immediately to absurdity.

I said I’d keep my opinion to myself but I changed my mind.

Those responsible for managing this airport were incredibly unimaginative, really completely unable to put themselves in the perspective of a pilot. It’s not hard to recognize that closure of the taxiway to 22 (the red path in the diagram) creates precisely this extremely dangerous situation. With a critical decision point camouflaged as a terminus, pilots are put into a perceptual abyss without mitigating countermeasures for the inevitable confusion, without extraordinary signage/wayfinding.

Pilots were put into this situation during their preflight checks, with their attention focused intently already, on a wide array of essential tasks.

But it gets worse:

There had been incidents over the years at Lexington Blue Grass where pilots ended on runway 26 instead of runway 22 but the mistake was caught in time. The mistake was happening often enough that the airport was, at the time of the crash of Comair 5191, in the process of changing its runway and taxiway layout to prevent just that type of accident from happening. In fact, the construction to make the airport safer was actually halted by court order to preserve evidence for the lawsuits that followed the crash.

https://www.reddit.com/r/aircrashinvestigation/comments/mtjo1k/comment/gv5ss5u/?utm_source=share&utm_medium=web2x&context=3

So, take a minute to think about that. The airport management put pilots into situation B without imagining the danger of it, precisely at the time that the taxiway to runway 22 was being reconstructed specifically to prevent the wrong-runway confusion that had been commonly reported at this airport EVEN IN SITUATION A.

Immediately after the crash, this attention-focusing sign was installed at the start of runway 26:

Aircraft would have to run over it to miss it, an appropriate degree of way-finding disambiguation for use in the perceptual abyss of a fork in the road disguised as a terminus. Also an appropriate degree of respect for pilots, for their task load, and for the limits of human perception.

The lighted X remained there until reconstruction of the taxiway to runway 22 was completed:

Let’s go back to gorillas.

What if you’ve seen the gorilla video before? You’re already prompted to look for gorillas. Will you still fail to see the gorilla, in a second version of the basketball video? Of course not. But the problem is not solved. Here’s version 2 of the gorilla experiment video. Whether you’ve seen the original gorilla video before or not, watch it, and as with the original, focus on counting the number of basketball passes between the players in white shirts:

Fascinating, isn’t it?

If you’d like to think more about it, Jordan Peterson’s discussion is on target. He gets to the essence of it:

More discussion by Daniel Simons:

Why overlook the obvious, right? This post dwells on what otherwise seems obvious. But what seems obvious, usually isn’t:

We intuitively believe that the mechanisms of attention will automatically bring to focus things that matter to us, and it turns out that intuition is dangerously wrong

Daniel Simons

As promised:

...a collection of examples of focused attention and failures of attention, of difficulties in achieving attention, and then, significant risks of communication failure even when attention is achieved, the built-in limitations of human attention, and the necessity of attention nonetheless. If you read this post to the end, I think you'll appreciate attention in ways that may not have occurred to you before.

There are software development implications for modeled environments (metaverses, digital twins, CADs and BIMs):

- a framework is required for calling attention to and formulating communications that can be understood. The call to attention is the start of any formalized communication structure.

The following is a description of a proposal for development of attention-focusing "TGN rigs" within digital modeled environments in industrial domains like mechanical engineering and the AECO (Architecture, Engineering, Construction and Operations) industry.

TGN: A DIGITAL MODEL INTERACTIONS FORMAT STANDARD

Modeled environments are extremely complex oceans of information, and the methods/techniques for interpreting them have evolved, to date, inadequately. There are various things like ML and AR/MR and so on, but a generalized approach to sense-making in complex digital environments remains underdeveloped.

This document is a software development specification that addresses the problem. The purpose of the proposed development is to make things clear through ATTENTION-FOCUSING rigs, TGN rigs, within modeled digital environments.

Improved mechanisms of attention-focusing interactive close study of digital models (including digital twins), through TGN rigs, make user engagement with complex data more effective, more interactive, more clarifying, more communicative and expressive, and more revealing of insight. TGN might even bring the fun back into serious technical work by elevating the level of interpretive engagement in digital media.

It also provides a framework for further interpretation by machine cognition and human interaction with cognitive systems, applied against spatial digital models, via deepQA apps.

TGN SPECIFICATION DOWNLOAD

TGN: a digital model INTERACTIONS format standard (Apple Book)

TGN DISCUSSION AND DEMONSTRATION VIDEO PLAYLIST:

01 TGN: rigging for insight https://youtu.be/CGXrk9nGj0Y (2:16)

02 TGN: what is TGN exactly? https://youtu.be/byIW0T8MCsk (5:35)

03 TGN: demonstration https://youtu.be/wTh2AozTHDc (3:40)

Self critique of this demo is here:

04 TGN: portability https://youtu.be/Je859_cNvhQ (5:17)

05 TGN: industry value https://youtu.be/Ka0o1EnGtK4 (9:27)

(the dev platform I mention in the videos is iTwin.js, but TGN can be developed on every platform where TGN is useful and desired)

The industry doesn’t need great new features (nor old features packaged in a very effective new way) siloed in yet another new app. What it needs is a framework for attention-focusing rigs (TGN rigs) within modeled environments of all kinds, with portability of rigs from app to app, platform to platform.

There should be a TGN standard core that’s managed across vendors to support cross-platform TGN expression with reliable fidelity. Above the TGN core there can be domain and app-unique TGN enhancements that support TGN rig special functions unique to a domain or vendor app constellation. TGN should ride both waves: a standardization wave and a differentiation wave. The standardized core (ever-evolving) creates a lot of opportunity for a diversity of new differentiation, in existing apps and platforms, and for new apps. Even, I’d say, new apps founded on TGN functionality. Anyone doing this will benefit from the TGN standard core.
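One way to picture the core-plus-enhancements idea is a portable rig record with a required core section and optional, namespaced extensions that a receiving app may or may not support. The Python sketch below is purely hypothetical: the field names (`name`, `target`, `viewing_arc_degrees`), the extension keys, and the `export_rig` and `import_rig` functions are assumptions made for illustration and are not taken from the TGN specification.

```python
import json

# Hypothetical core field names; the real TGN core is defined by the specification.
CORE_FIELDS = {"name", "target", "viewing_arc_degrees"}


def export_rig(core: dict, extensions: dict) -> str:
    """Serialize a rig as JSON, refusing to export if a core field is missing."""
    missing = CORE_FIELDS - core.keys()
    if missing:
        raise ValueError(f"missing core fields: {sorted(missing)}")
    return json.dumps({"core": core, "extensions": extensions}, sort_keys=True)


def import_rig(payload: str, supported: set) -> dict:
    """Read a rig in another app: always keep the core, activate only known extensions."""
    doc = json.loads(payload)
    extensions = doc.get("extensions", {})
    return {
        "core": doc["core"],
        # Extensions this app understands are activated.
        "active": {k: v for k, v in extensions.items() if k in supported},
        # Unknown extensions are reported rather than breaking the import.
        "ignored": sorted(k for k in extensions if k not in supported),
    }


if __name__ == "__main__":
    payload = export_rig(
        {"name": "rig-1", "target": "pump-7", "viewing_arc_degrees": 90},
        {"vendor_a.markup": {"color": "red"}, "vendor_b.filter": {"level": 2}},
    )
    print(import_rig(payload, supported={"vendor_a.markup"}))
```

In this sketch the core travels intact from app to app, while vendor-specific enhancements degrade gracefully where they are not supported, which is the portability property the standard core is meant to provide.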

Other articles:

https://tangerinefocus.com/2021/11/18/the-future-of-technical-drawing-rev-1/ – a short summary of TGN rig features (including the built-in viewing arc plus the rest of what comprises a TGN rig)

https://tangerinefocus.com/2021/11/09/tgn-a-framework-for-further-interpretation-by-machine-cognition-and-human-interaction-with-cognitive-systems-applied-against-spatial-digital-models-via-deepqa-apps/ – this is for those who want to look further, at what can happen AFTER attention-focusing TGN rigs are in use clarifying models