This is the text of my talk at BIM Coordinator Summit 2022 in Dublin. A link to the video recording is here:

I’ll talk about the techniques we, all of us, use to interpret and understand complex spatial environments. What’s the FORM of our ENGAGEMENT with real and digital space, with complex visual spatial worlds/realities?

I hope that by the end of this talk you have an answer that’s useful to you.

The first thing to recognize is that our engagement with reality has a particular FORM.

This is my topic.

It applies alike to digital models, BIMs, digital twins, Omniverses, NeRFs, point clouds, and so on. And it definitely applies in the real world too, walking around in everyday life.

I note at the beginning, it applies no matter your philosophy of modeling:

- In the case that you set out to model to perfection and totality: the “perfected total modeling” philosophy

And it applies:

- In the case that you set out to model ENOUGH to satisfy some purpose: “the model good enough” philosophy:

- During DESIGN, a model good enough to declare your design adequate

- During CONSTRUCTION, good enough to communicate what to build and to some extent how

- During O&M, good enough to assist with operations and maintenance

Whether your philosophy is “perfected total modeling”, or, “good enough modeling”, our visual/cognitive form of engagement with models/worlds/places/realities/environments is the same.

Alright? So let’s go. There’s a lot to cover.

See this?

This is an attention-focusing rig in a garden.

I know, it’s a cat, but it moves like a camera rig, focusing its attention, with intensity.

I think it’s fair enough to call a cat an attention-focusing rig in a garden.

Picture yourself as the cat.

Be the cat.

What draws your attention?

What prompts your engagement?

The patch of tall grass you like lying in over there? Some curious motion, some bug flying by to eat, some field mouse to chase and pat around…

This focus, this attention drawn, is a major part of our form of engagement with space/reality.

For us.

For cats too.

I made a short video. It’s about 90 seconds. It’s about people in another kind of garden.

While you look at it, think about how we engage with a place. I tried to capture that with the video. I try to show something about the FORM of engagement with a place. See if you can see it. Maybe it works. See if you develop some understanding of the place like we did:

The form of engagement

Like the cat in a garden, our attention is drawn to certain moments that attract our interest.

We don’t commit to memory and record all the visual information that pours into our eyeballs. We ignore most of it. Cognitive effort is put instead into selecting (somehow) what to focus on, and storing memories of those acts of focus.

We can classify two kinds of attentive focus employed as we walk around rocky cliffs and swim in an octopus’s garden:

- We view the wider expanse of the place for an overall impression, to get the lay of the land so to speak, an expansive way of looking; for overview. It produces a mental model of the whole environment. But the model has many gaps and it’s fuzzy. If you doubt that, recall a mental model of anything familiar to you (say, your bicycle), and check it for accuracy and completeness.

- We narrow our focus to certain moments that draw our attention, where we’re motivated (for whatever reasons) to look more closely for sharper impressions. These hold in the memory together with the wider overview.

Why are we drawn to certain moments and not others?

Who can say?

Reasons vary.

Do you live with another person? Have you figured out what draws their attention and why? Yes? Well lucky you!

Motivations for attention vary, and many are less than straightforward. Some are easy to figure out though:

- at the sea coast rambling around the edge of high cliffs, attention better be paid; a wise investment of cognitive effort indeed.

- settling at some safe spot down by the water, the prompts for attention might be less direct.

- aesthetics? Maybe we gaze on the form of large rocks, maybe we’re architects sampling natural form, daydreaming for inspiration.

- maybe it’s the bright green that grabs us, the surprising sea life in pools.

Whatever draws our attention, for whatever reasons, here’s the point:

The wider expanse (our overview impression) and the more sharply defined moments that draw our attention through narrower focus, all of these hold in the memory, and get stitched together in some kind of mental framework.

That framework is some kind of INTERPLAY dynamic, some kind of cognitive process where we consider each attentive moment not in abstraction, but with reference to all the other moments, wide and narrow, expansive and narrowly focused. There is some kind of ping-ponging back and forth, in consideration between all of these:

- a contextualization of each narrowly focused moment within the wider expansive overview,

and at the same time:

- a progressively intensifying clarification and filling out of the wider overview, through the lens of each moment of narrowed attentive focus

THIS is the FORM of our ENGAGEMENT:

The Wide, the Narrow, and the INTERPLAY:

Am I describing the basic observable dynamic of thought itself?

Maybe.

A good argument can be made for that.

If cats could talk we could ask them. Thinking is INTERPLAY. And through thinking, we develop UNDERSTANDING. Which of course becomes even more useful, AS THINGS BECOME COMPLEX.

How do we understand complex things like rock cliffs, octopus gardens, BIMs, digital twins, NeRFs, Omniverses…, &c?

Do we try to grasp, or mentally eat them up, whole? Whole oceans of information, whole environments at once? Do we gulp down the whole chicken?

No.

We develop understanding through a dynamic INTERPLAY that builds a kind of “energy state” structure. The focused moment clarifies and fills out the wider overview while at the same time in reverse, the wider overview imbues the narrower moment with meaning.

Understanding starts out superficial but increases as we engage, and continues to increase as we continue to engage in this INTERPLAY.

As cognitive energy (thought) is put into the INTERPLAY, and the play continues, the energy in the system gets bumped up to a higher electron shell level, metaphorically.

The more you exercise the INTERPLAY, the better the understanding for some purpose.

So what’s that got to do with software, and technical domains like design and construction, architecture and engineering?

It’s obvious.

What’s the purpose of digital media, in technical fields?

- to assist the development of understanding.

To do that, digital media has to:

- facilitate and amplify the INTERPLAY (bump up the cognitive energy to a higher shell level)

Look:

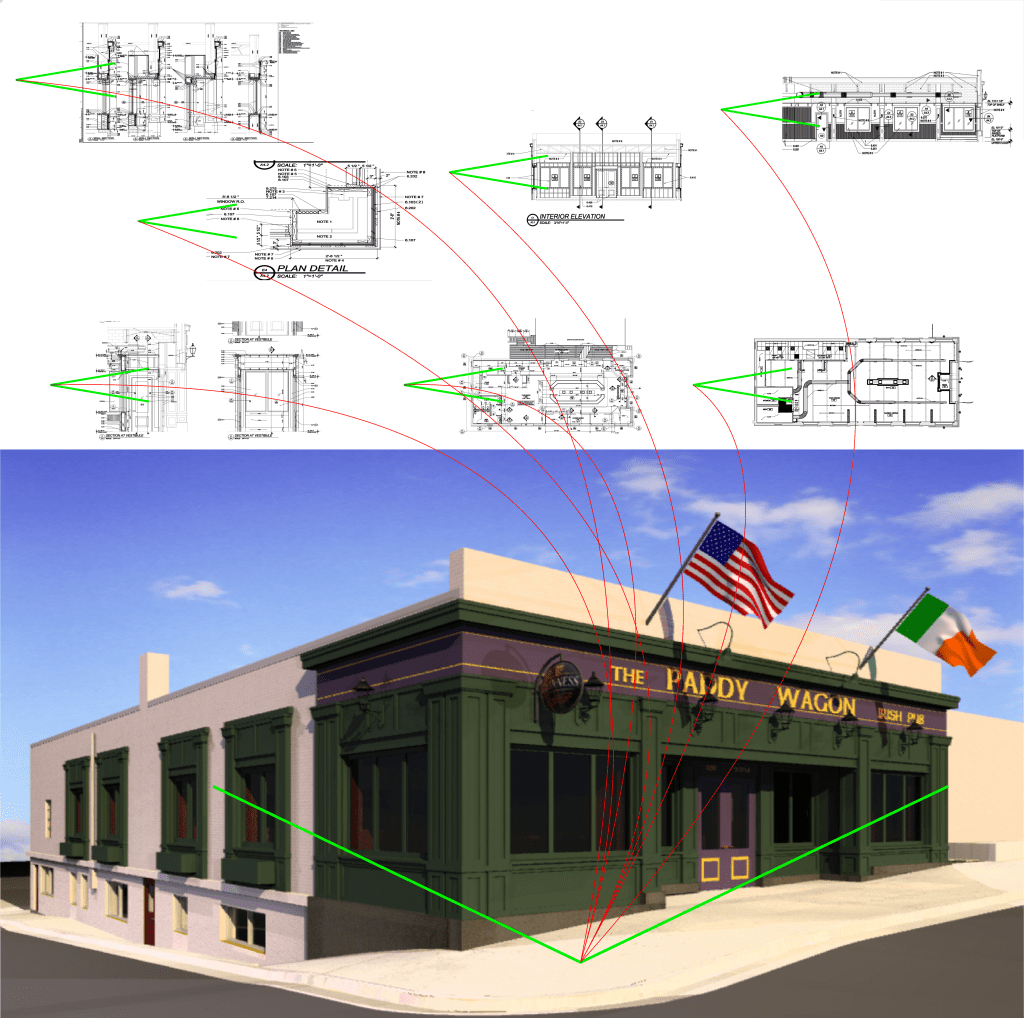

This makes sense, right? The Wide, the Narrow, and the INTERPLAY, the FORM of ENGAGEMENT that builds understanding:

So, see the similarity in the diagram below? The Wide (the model), the act(s) of Narrowed attentive focus (the drawings), and the INTERPLAY as engine of interpretive understanding:

Even so, for 20 years now, you still hear people saying:

“Get rid of drawings. The model is everything.”

Yeah. Sure. So now you have no attentive focus and no INTERPLAY, no engine of interpretive understanding.

And no confidence* either. Confidence is another part of understanding. It deals with the affirmative capacity, to assuage specific kinds of doubt, or irritation, like:

- is the model good enough? Is it “done” yet?

- do I understand the model adequately (beyond superficially) for some purpose? Is the INTERPLAY well engaged?

- is everything that should be here, here? (narrow, at a moment of focus)

- Can I affirm to others that all that should be here, IS here, that nothing that matters is missing, here? (narrow, at a moment of focus)

Anyway, as a maker of digital models and construction drawings, these were some of the things that occupied my brain, pretty much continuously.

Here are some of the projects I worked on. Take a browse through my old memories if you like:

Around 2007 or so I noticed this INTERPLAY I was doing, all the time, as everyone does. I realized while building the models, that to answer the most basic questions about them, it was always necessary to look at them through the lens of moments of narrowed attentive focus, otherwise known as “drawings”, which, since around 1998 I’d been automating from digital models.

The drawing graphics fell out of the model (they were driven by the model). That was the mantra back then. But the mantra missed the point. The important thing was this:

those drawings helped me understand the model.

This was dependency.

Without the drawings, the model surpasses and overwhelms our cognitive grasp, already in the first days of a project’s modeling. (People outside this field may not know that a model can take months, or years, of full-time elaboration by a team of people, working a lot of overtime to do it too.)

As it does, we rely on technique to handle it, to make sense of it. We rely on a form of engagement with it. Without the drawings, without the narrowed attentive focus, the model eventually becomes unintelligible, or only superficially intelligible, just an ocean of information to swim forever in and get nowhere.

The opposite was (is) also true: I don’t understand the drawings either, without the model.

I don’t understand the model, or the drawings, neither one without the other.

Standing on their own, drawings might nicely serve as abstract art, but standing alone they’re functionally meaningless. They become meaningful only when I see them (in my mind’s eye) where they really are IN THE MODEL. The model in fact is the only thing that imbues them with meaning.

So this is interdependency.

It’s a drawing-model fusion that we’re engaged with, a cognitive INTERPLAY. That’s no surprise, because that’s how our minds work. It’s the nature of thinking, looking, understanding.

What was a surprise was the software. Specifically, software’s lack of assistance with the essential cognitive interplay.

Although I was an enthusiastic user of supposedly advanced software and methods, the software left us performing this INTERPLAY as a mental exercise only, wholly unassisted by digital media.

That just seemed stupid to me. Because the exercise, in technical fields, is highly complex, and digital modeled environments have precisely the capacity to assist with this, if anyone would simply have thought to do it.

In their 1938 book, The Evolution of Physics, co-authors Albert Einstein and Léopold Infeld wrote:

The formulation of a problem is often more essential than its solution, which may be merely a matter of mathematical or experimental skill. To raise new questions, new possibilities, to regard old problems from a new angle requires creative imagination and marks real advances in science.

https://en.wikipedia.org/wiki/The_Evolution_of_Physics

The formulation of a problem is often more essential than its solution.

I wrote to the software company that I was a fan and customer of. I wrote about this fusion idea, that the drawings, embellished with clarifying notes and graphics (as they are), ought to be visible where they really are, at their true orientation within the models. And that their software should automate this, because it would help us understand models and drawings better at the same time, and would make our models, and the drawings too, more clear, accessible, and useful to more people.

They hired me to lead a team to design and implement what I proposed: automated drawing-model fusion, a boon to INTERPLAY, a cognitive assist to understanding, and the opening of an important DOOR to the future of digital media and our form of engagement with it.

Our team released that innovation in commercial software from Bentley Systems (MicroStation) in May of 2012,

10 years ago.

I have some videos here from back then, of our automated drawing-model fusion in MicroStation, some YouTubes I made in 2011 or so. Check them out if you’re interested:

I say this opened a door, and since then, 10 years gone, 9 different software companies that I know of automate this fusion.

Drawing-Model Fusion Automated:

- Bentley MicroStation (2012)

- Graphisoft BIMx (2013)

- Solidworks (2015)

- Dalux

- Revizto

- Morpholio and Shapr3D (together)

- Tekla (2020)

- Autodesk Docs (2022)

So we went through this door, and what we see behind us is 10 years worth of development that can all be called generation one (v1.0), of articulations of narrowed attentive focus within digital modeled environments.

Well, that’s the past. What’s ahead?

I’ve been down this road before and I intend to do it again. And do it better this time.

The TGN rigs I propose solve THIS problem:

the requirement in all modeled environments for a standardizable FORM of ENGAGEMENT with models that builds more thorough understanding faster, that draws focused attention to things that need to be understood, and provides both CLARITY and CONTROL over communication about very complex situations in very complex models.

This time, the fusion is designed to be MORE effective, MORE communicative, more expressive, more engaging, more interactive, more compelling (likely addictive), easier to use, and far more useful, a much stronger assist to thought and understanding of very complex situations in very complex models.

And this time around, in v2.0, “TGN” as I call it, the industry can avoid siloing attention-focusing rigs within app, platform, and format silos. Attention-focusing rigs can be developed with a community-managed standardized core feature set that ensures shareability across app/format and platform boundaries with adequate graphical fidelity.
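To make the "standardized core plus app-specific extras" idea concrete, here is a minimal sketch in Python. It is only an illustration of the pattern, not the TGN specification: every name in it (`Rig`, `rig_id`, `model_ref`, `camera_position`, `camera_target`, `note`, `extensions`, `export_rig`, `import_core`) is an assumption I made up for the example. One app exports a rig with its own extra features attached, and a different app reads back just the shared core and ignores what it doesn't recognize.

```python
import json
from dataclasses import dataclass, field, asdict

# NOTE: all field names here are hypothetical illustrations,
# not taken from the TGN functional specification.
@dataclass
class Rig:
    rig_id: str
    model_ref: str            # which model the rig lives in
    camera_position: tuple    # (x, y, z) in model coordinates
    camera_target: tuple      # the point the rig focuses attention on
    note: str = ""
    # App-specific features outside the shared core, keyed by app name.
    extensions: dict = field(default_factory=dict)

# The keys a receiving app is assumed to understand.
CORE_KEYS = ("rig_id", "model_ref", "camera_position", "camera_target", "note")

def export_rig(rig: Rig) -> str:
    """Serialize the whole rig, core and extensions, to JSON."""
    return json.dumps(asdict(rig))

def import_core(payload: str) -> Rig:
    """Rebuild a rig from the core keys only, dropping unknown extensions."""
    data = json.loads(payload)
    core = {key: data[key] for key in CORE_KEYS if key in data}
    # JSON has no tuple type, so lists are converted back to tuples.
    core["camera_position"] = tuple(core["camera_position"])
    core["camera_target"] = tuple(core["camera_target"])
    return Rig(**core)
```

In use, the sending app might call `export_rig(rig)` on a rig that carries its own `extensions`, and the receiving app would call `import_core(...)` on the result. The focus point and note survive the trip, and the extras are left behind rather than breaking the exchange.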

Here’s a demonstration of TGN rigs created and viewed within a modeling app, then shared with someone using a different app.

A Triple Fusion:

TGN is intended as an open standard form of engagement with models. TGN enables a more expressive and communicative articulation of attentive focus within modeled environments of all kinds, at every phase of model development and use.

TGN is a triple fusion of:

- modeled environments (of any kind)

- technical “drawing” (as attention-focusing rigs IN digital worlds)

- techniques of camera control from the history of film (who’s better at techniques of looking at things?)

Regarding the latter, camera control and the history of film, there are some GREAT examples here:

Take a look. It’s well presented, and more importantly, it’s a glimpse into a critical part of the way we look at things, the techniques we have for doing that, specifically involving the use of cameras and their motion. Serious business, not child’s play.

The narrator of the film history clip says at the end:

“…camera moves, in film, combining informational control and emotional positioning; movement becomes the director’s editorial voice.”

https://youtu.be/yLHNBssyuE4

And, this is applicable in technical domains like engineering, architecture, and construction, far more than many might first imagine.

TGN will make digital worlds more effective, useful, and engaging for architects, engineers, builders, and facility occupants and operators.

TGN is NOT yet another software product.

It just makes the software you love, better.

TGN is proposed as an open standard available to all software developers, for use in all apps and platforms, commercial and open source.

If you’re a developer, or a user, and you want TGN features in your app/platform, here are some resources:

TGN Resources:

TGN functional specification:

TGN: a digital model INTERACTIONS format standard (Apple Book)

TGN: a digital model INTERACTIONS format standard (ePub)

TGN: a digital model INTERACTIONS format standard (iCloud)

TGN: a digital model INTERACTIONS format standard (PDF)

I intend to reformat this on GitHub, for open access editing.

TGN demo videos:

TGN Sunburst diagram:

Attention-Focusing Rig (AFR) possibilities are diverse. Each industrial, creative, technical domain and discipline has its own unique characteristics and needs, and each has tremendous diversity in existing applications and platforms, with a wide range of different graphical capabilities.

That being the case, there is no single correct and definitive AFR specification possible, not one that encompasses either what’s needed from each domain, let alone what’s possible, springing from each.

And yet, it is conceivable that each developer implementing the AFR concept on each software platform may at once be:

- completely free to innovate all possible AFR features that may be discovered by each developer in evaluating their user requirements. In the diagram I hint at these. They’re colored blue, and I sample just some of those possibilities and name/describe them in the diagram.

And yet,

- developers may conform to and take advantage of a set of community-managed, and open source, standard features at the core of AFR. The standardized core features I refer to as TGN. TGN is colored orange in the diagram:

Just a quick note since I just saw this on my LinkedIn feed: AtomIFC https://github.com/QonicOpen/AtomIfc looks to me like an important facilitator of a reliable models gateway, which is exactly what developers would be looking for in implementing the TGN rig ID/Admin features. See the segment at 5:00 in the diagram:

Things you can do

To get TGN, if you want it, there are things you can do:

- contact me with questions, or

- do you believe AFR/TGN is no good? No problem. Contact me and tell me that too.

- if you’re interested in setting up a community-managed open source TGN core developer community, let’s discuss it

- if you’d like my help designing AFR/TGN features in your app/platform, contact me

Message me on LinkedIn https://www.linkedin.com/in/robsnyder3333/ or email me here:

Well that’s me (also my cat) in the conference magazine:

https://issuu.com/www.bimcoordinatorsummit.net/docs/bcs22_magazine/102