This idea is colossal in the history of errors:

“there will be no drawings in 5 (or 25) years.”

– the paradigm

Of course this (the paradigm) is premised on the idea that drawing is the predecessor of digital modeling, that digital modeling succeeds drawing, makes it obsolete, replaces it.

But here’s the error. Digital modeling is not the successor of drawing;

digital modeling is the successor of:

(drumroll…)

mental modeling (and its companion).

Modeling (of any kind) is related to drawing as “world” is related to “focused attention”.

Different things.

Interrelated fundamentally.

Neither one precedes, nor succeeds, the other. They’re categorically different things, and intrinsically intertwined.

And both evolve:

- Models evolve from mental models (only) to digital models (as companions to mental models).

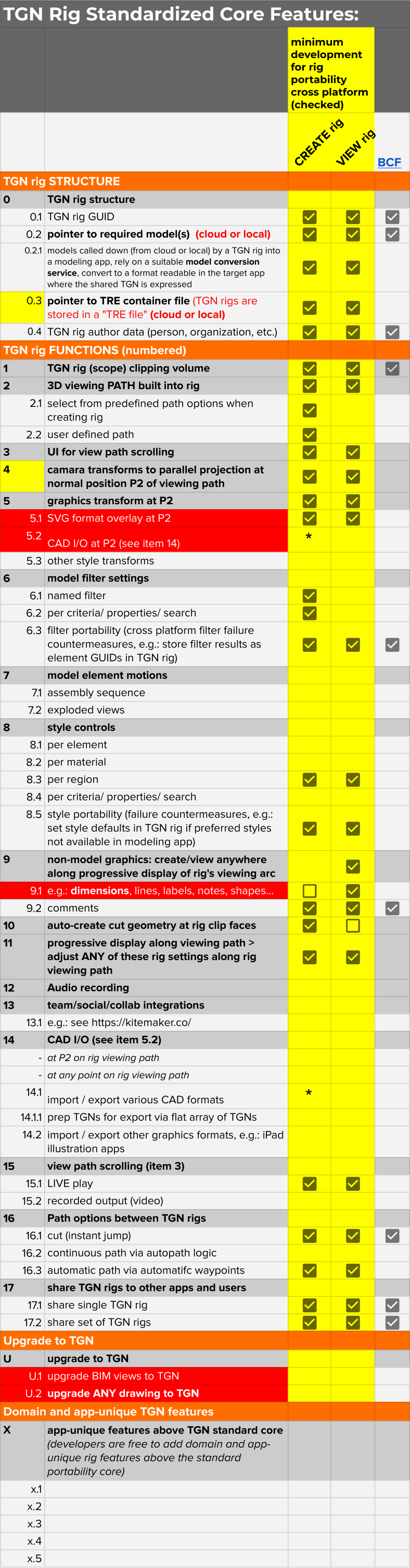

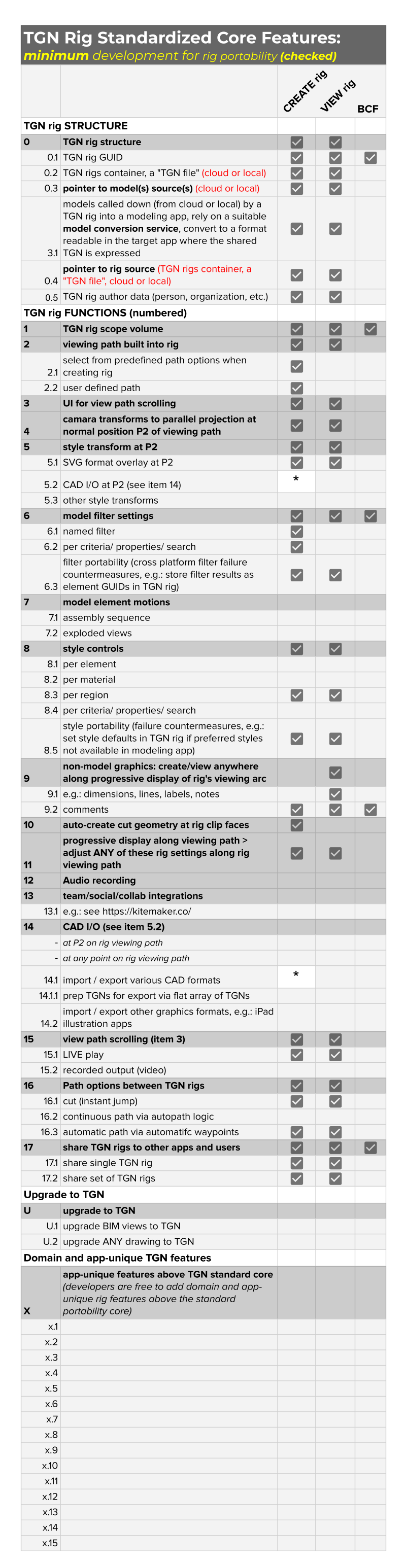

- Drawing evolves from 2D (CAD or hand-drawn) to TGN attention-focusing rigs within digital models.

I wrote a software development functional specification that runs counter to THE PARADIGM. Download links to the spec are in these two posts, with discussion:

We’re now 25 years into a paradigm (a set of assumptions, theories, intellectual frameworks) within which this idea, the counter-paradigm TGN, cannot be heard.

It falls on deaf ears among adherents to the paradigm (largely software developers and marketers) even as it has abundant support among software users and in plain logic and evidence.

That’s a typical situation.

But I won’t give up.

Many years ago, Thomas Kuhn wrote The Structure of Scientific Revolutions, in which he argued that science does not advance through the accumulation of new discoveries and information. Scientists are not always and forever refining their repository of facts about the universe. Rather, scientific views change in fits and starts, through a kind of punctuated equilibrium.

Researchers agree on a basic set of assumptions and theories about the nature of their subject and the purpose of their work. These assumptions and theories, taken together, constitute a paradigm. Paradigms are simply intellectual frameworks, comparable to the environmental models your brain constructs on the basis of sensory information. All paradigms are necessarily imperfect, because natural phenomena are of untold complexity and our knowledge is very incomplete. Nevertheless, reigning paradigms are favoured because of their explanatory power; they fit the evidence and the research well enough, and they guide what Kuhn calls “normal science” – everyday research and inquiry within the paradigm, which aims to refine reigning theories and fit them ever more closely to reality.

Here and there, there are anomalies which the paradigm cannot explain. Researchers engaged in normal science will ignore or downplay these anomalies as long as they can, because they cannot be understood or processed with the intellectual tools that their paradigm grants them. These anomalies require a new paradigm, a different set of fundamental assumptions, and this is inconceivable until there are so many anomalies that the reigning paradigm is discredited and the field enters a crisis. It is at this point that you end up abandoning the miasma theory for the germ theory of disease, or setting aside the geocentric solar system for a heliocentric one.

Paradigms, then, not only make interpretations and predictions. They also establish the kinds of questions it is appropriate to ask, and how these questions are to be answered. When you are inside a paradigm, it does not seem so much true as unquestionable, or even invisible. This accounts for the strange ability of theories almost to make reality, and to form closed, inviolable worlds of thought unto themselves. Any set of data and observations can support multiple hypotheses, but under the spell of a theory, you see in the data only confirmations of what you already believe. Contrary, falsifying proofs don’t even seem disqualifying so much as boring or bizarre, and above all unimportant.

Kuhn elaborated his concept only in the context of the sciences, but it is plain that paradigms govern everything, from political discourse to the study of Shakespeare. The sustained study of natural, historical or literary phenomena doesn’t make you smarter or better at understanding the world. As the sophistication of theory and interpretation increases, the scope of inquiry narrows, and the possibilities for self-deception and absurdity only multiply. Hence the familiar jokes about the ridiculous ideas that only someone with a doctoral degree could propagate. …There are errors and mistaken interpretations to which low-information observers are subject, but high-information, critical thinkers also build intellectual worlds that are subject to deeper, harder errors, and these people will never be convinced they are wrong.

Kuhn and others have noted that scientific knowledge advances not so much by discovery as by the deaths of prior scientists:

Copernicanism made few converts for almost a century after Copernicus’ death. Newton’s work was not generally accepted … for more than half a century after the Principia appeared. Priestley never accepted the oxygen theory, nor Lord Kelvin the electromagnetic theory, and so on. … Darwin … wrote: “Although I am fully convinced of the truth of the views given in this volume … I by no means expect to convince experienced naturalists whose minds are stocked with a multitude of facts all viewed, during a long course of years, from a point of view directly opposite to mine … ” And Max Planck, surveying his own career in his Scientific Autobiography, sadly remarked that “a new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it.”1

One of the biggest problems here is that the error and the source of the error are not the same. People are most demonstrably wrong in their conclusions, but they arrived at these wrong conclusions via a broader intellectual framework that they leave mostly unstated, and that isn’t even subject to ordinary falsification.

1 Thomas Kuhn, The Structure of Scientific Revolutions, pp. 149–50.