This form of visual engagement with models should run in software for all kinds of spatial visual digital models. Emphasis on all kinds.

We can list many kinds of models, but think of any kind of digital model and I include it. A few examples: digital twins, BIMs, photo- or scanner-generated models, and models generated by AI, by other computation, or by NI (natural intelligence)…

How could this become a standardized form of engagement accessible in all modeling and model-handling apps and platforms?

Through Open Source:

TGN is CODE you already have in your modeling app. It’s just not packaged together there for coherent function for visual engagement. TGN is about making that function easy, accessible, and clarifying. And it’s also about making that portable, so once you have it, you can share that into other modeling apps and platforms.

I’m asking software companies and independent developers to join a project to do this development together, as open source, for the benefit of all modeling apps and their users. TGN will improve how people engage with models, making it easier for more people to make better use of them.

It’s also a new base for further innovation in this direction for anyone who wants to add/extend/differentiate.

The 8 features shown in the video at the top of this post, and in the diagram in the TGN OPEN CODE post, make up the proposed TGN Core.

Many more features supporting the Attention Focusing Rigs (AFR) concept are possible, to the extent anyone can imagine them. A bunch of possible additional features are outlined in a TGN Specification document I wrote. Download links here:

TGN Rigs, rigging models for insight, clarity, interpretive power, communication

The TGN developer spec is free to anyone who wants it. Download:

TGN: a digital model INTERACTIONS format standard (Apple Book)

TGN: a digital model INTERACTIONS format standard (ePub)

TGN: a digital model INTERACTIONS format standard (iCloud)

TGN: a digital model INTERACTIONS format standard (PDF)

TGN is proposed fundamentally in support of human engagement with models for interpretive and generative purposes, in recognition of the fundamentals of how human engagement with, and perception of, a spatial visual environment actually works.

But this can be extended. TGNs within models, when in use, could be extended specifically to act as gateways for human-in-the-loop input back into AI and other computational iterative model generating systems.

“We need human input to confirm that AI is driving us in the right direction…”

KIMON ONUMA

If we need to strengthen human engagement with (AI or naturally generated) models (we do), then we should develop better equipment for doing exactly that, better equipment in models, for human engagement with models.

TGN supplies an optimal access point for such controls. We see the need for this in any kind of intelligence-generated modeling (A or N, artificial or natural intelligence).

In nuclear power plants, control rods provide control over processes that otherwise will tend to run OUT OF CONTROL.

So, it’s an apt analogy for interpretive and generative processes that tend to run out of control and therefore require equipment specifically designed to supply that control.

CONCLUSIONS:

- Digital tools are an extension of human perceptual/cognitive equipment for engagement with models (real world or digital) for interpretive and generative purposes.

- Digital tools need to further advance in support of this perceptual/cognitive equipment.

- This need is evident whether models are generated by natural or artificial intelligence.

- The equipment provided to date in modeling software is inadequate/underdeveloped. This inadequacy is the greatest single reason that technical drawing still supplies the majority of revenue for major commercial modeling software developers, even in 2023, after decades of modeling.

- TGN is an open source software development proposal intended to raise the adequacy of the relevant equipment within all modeling software (or model-handling software, all kinds).

- Commercial and independent software developers are invited to join a project to make this happen. Contact me if interested.

- TGN is a minimum feature set that a) will make a difference and b) corresponds to the way human perception works in modeled worlds (real or digital).

- But TGN can be extended and added to, to the extent anyone can envision. The extensions and additions need not be open source.

- “Control Rods” are optimally hosted within TGN rigs, within models. By control rods I mean human-in-the-loop input back into AI and other computational iterative model generating systems: human guidance, and the laying down of control parameters, control markers, and control drivers within models, for feedback into the model generating systems.

You can see why, right? You can imagine the development of a tremendous variety of such controls, and the fact that these would be made very easily accessible, visible, close at hand, and intelligible, within the context of these rigs.

Let your imagination loose. What control rods would you embed there to guide generative (AI or NI generated) development of the model?

More on this development proposal coming up. Stay tuned. And, don’t wait either. Contact me if you’re interested.

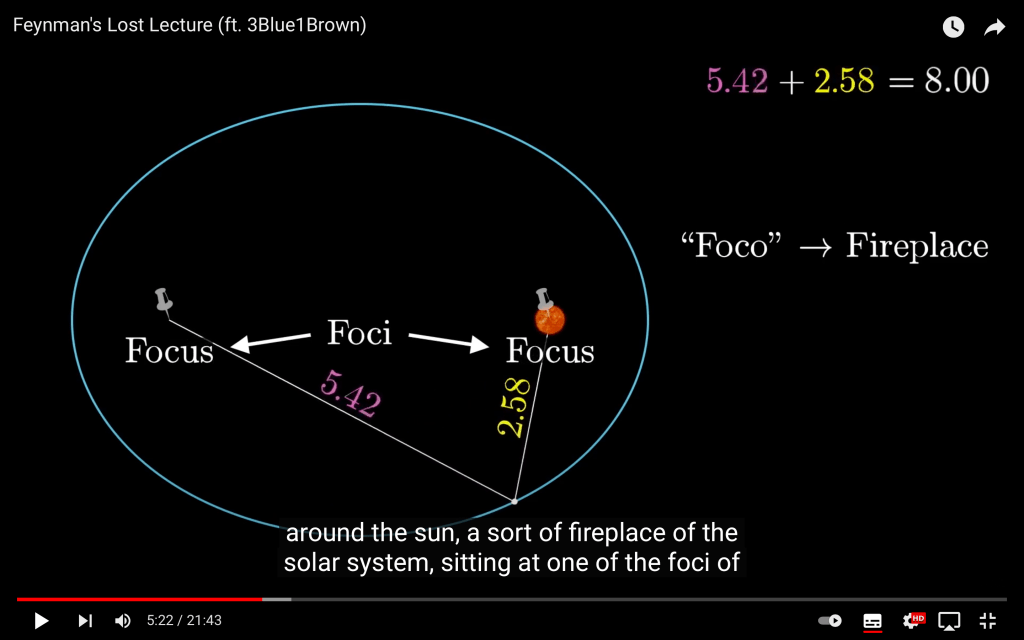

04:55 “…so the defining property of this curve is that when you draw lines from any point on the curve to these two special thumbtack locations, the sum of the lengths of those lines is a constant, namely the length of the string. Each of these points is called a ‘focus’ of your ellipse, collectively called ‘foci’. Fun fact, the word focus comes from the Latin for ‘fireplace’, since one of the first places ellipses were studied was for orbits around the sun, a sort of fireplace of the solar system, sitting at one of the foci of a planet’s orbit.”

https://youtu.be/xdIjYBtnvZU
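If you want to check the property described in that quote for yourself, here is a minimal Python sketch. It is only an illustration, not part of TGN: the function name `focal_distance_sum` and the example semi-axes (5 and 3) are my own assumptions. It walks around an ellipse and measures, at each point, the two "string" lengths to the foci.

```python
import math

def focal_distance_sum(a, b, t):
    """Sum of distances from the ellipse point at parameter t to both foci.

    Assumes an axis-aligned ellipse centered at the origin with
    semi-major axis a and semi-minor axis b (a >= b > 0).
    """
    c = math.sqrt(a * a - b * b)             # distance from center to each focus
    x, y = a * math.cos(t), b * math.sin(t)  # point on the ellipse
    d1 = math.hypot(x - c, y)                # distance to the focus at (+c, 0)
    d2 = math.hypot(x + c, y)                # distance to the focus at (-c, 0)
    return d1 + d2

# Hypothetical example values: semi-axes 5 and 3, sampled at 12 points.
a, b = 5.0, 3.0
sums = [focal_distance_sum(a, b, 2 * math.pi * k / 12) for k in range(12)]
print(sums)
```

Every entry comes out equal to 2a, the "length of the string", up to floating-point rounding.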